Running a tracking platform means a lot happens behind the scenes that is vitally important but rarely obvious. In fact, it’s the kind of work you only notice when it isn’t there.

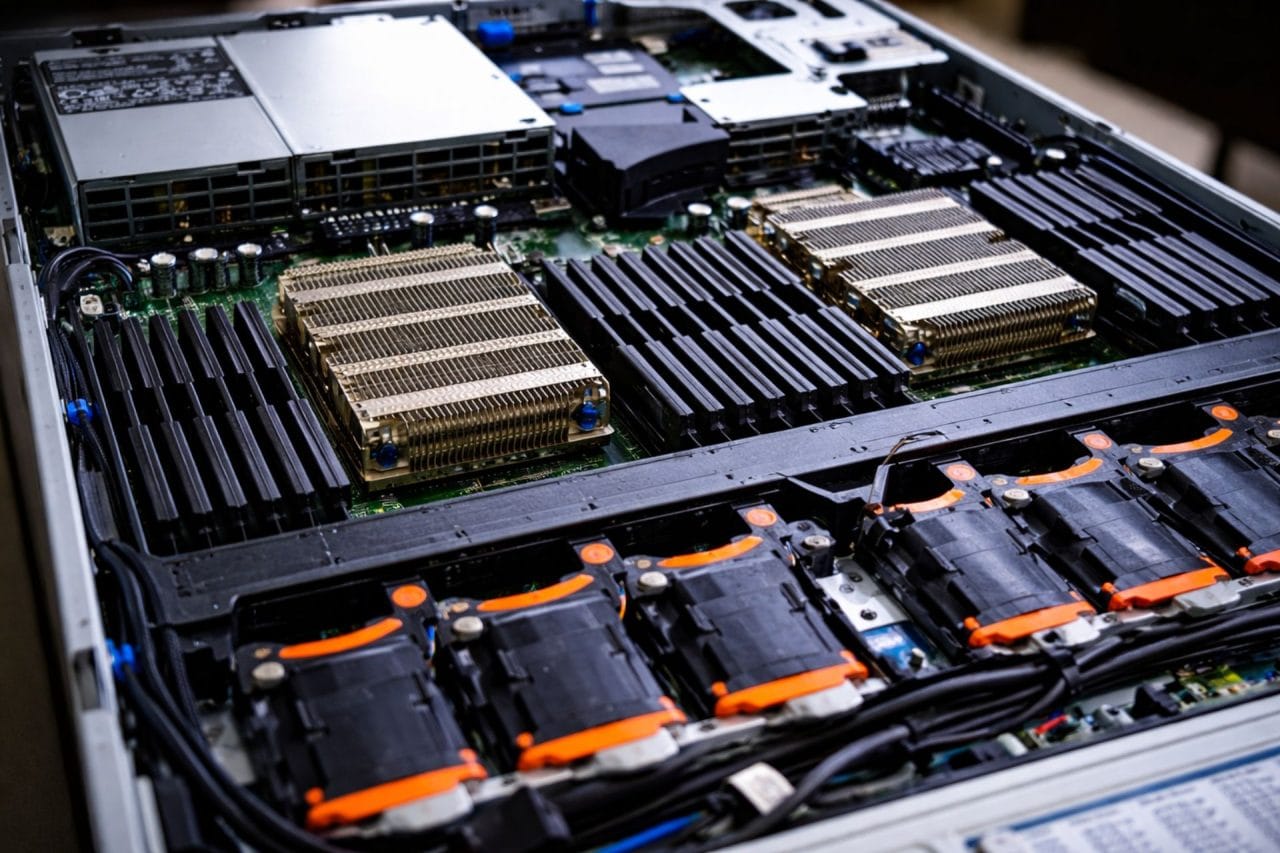

Backup servers, processing threads and decentralised systems aren’t glamorous (or cheap), but they are essential.

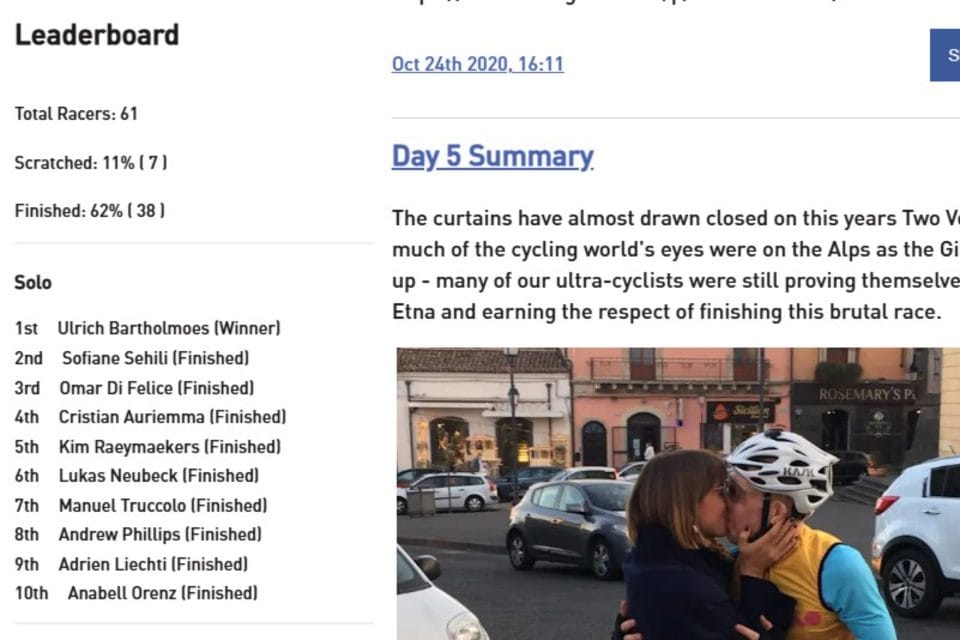

Over the past few months, we’ve been strengthening the foundations of Follow My Challenge to make the platform even more stable, resilient and future-proof. For those interested, here’s what we’ve been working on – with a few event photos added to break up the technical detail.

Additional GPS Server (N+1 Setup)

We already operate multiple web servers to host our maps. You may occasionally notice this when a URL changes between environments.

To complement this, we’ve now installed a second GPS server, acting as a live backup to our primary tracking server and adding another layer of redundancy.

In the past two years, our GPS server has only experienced very minor downtime (once or twice for short periods), but adding this N+1 redundancy ensures we’re protected even against rare failures. This is about professional-grade reliability.

What does this mean?

If the primary GPS server becomes unavailable, tracking devices can automatically switch to the backup server and continue sending data. This happens without dotwatchers or organisers noticing any disruption. The system detects the issue and redirects processing to the backup server.
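For readers who like to see the idea in code, here is a minimal Python sketch of ordered failover. It is an illustration only, not the platform's actual implementation: the hostnames, the `send_with_failover` helper and the `fake_send` stand-in are all hypothetical.

```python
from typing import Callable, Sequence


def send_with_failover(
    payload: bytes,
    servers: Sequence[str],
    send: Callable[[str, bytes], None],
) -> str:
    """Try each server in order and return the first one that accepts the payload."""
    last_error = None
    for server in servers:
        try:
            send(server, payload)
            return server
        except ConnectionError as exc:
            # Remember the failure and move on to the next server in the list.
            last_error = exc
    raise ConnectionError("all GPS servers unavailable") from last_error


def fake_send(server: str, payload: bytes) -> None:
    """Hypothetical transport that simulates an outage of the primary server."""
    if server == "gps-primary.example":
        raise ConnectionError("primary down")


used = send_with_failover(
    b"lat=52.10,lon=5.20",
    ["gps-primary.example", "gps-backup.example"],
    fake_send,
)
print(used)
```

In this sketch the sender simply works through an ordered list, so adding a further backup would only mean adding another entry.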

Smarter Data Syncing

We have optimised the import process across our multi-server environment. Tracking data has always been presented in chronological order; by transitioning to timestamp-based sequencing during import, we’ve improved efficiency and strengthened data synchronisation across distributed servers.
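A rough Python sketch of why timestamps make a good sequencing key across servers: two servers may number the same records differently, but the tracker and the time of the fix stay the same. This is an assumption-laden illustration, not the actual import code; the `Fix` record and `merge_fixes` function are hypothetical names.

```python
from typing import Dict, Iterable, List, NamedTuple, Tuple


class Fix(NamedTuple):
    tracker_id: str
    timestamp: int  # seconds since the Unix epoch (assumed format)
    lat: float
    lon: float


def merge_fixes(*sources: Iterable[Fix]) -> List[Fix]:
    """Merge fixes from several servers, keyed on (tracker, timestamp)."""
    unique: Dict[Tuple[str, int], Fix] = {}
    for source in sources:
        for fix in source:
            # The same fix received by two servers collapses into one entry.
            unique.setdefault((fix.tracker_id, fix.timestamp), fix)
    return sorted(unique.values(), key=lambda f: (f.tracker_id, f.timestamp))


primary = [Fix("rider-7", 100, 52.10, 5.20), Fix("rider-7", 160, 52.11, 5.21)]
backup = [Fix("rider-7", 160, 52.11, 5.21), Fix("rider-7", 220, 52.12, 5.22)]

merged = merge_fixes(primary, backup)
print([fix.timestamp for fix in merged])  # [100, 160, 220]
```

Because nothing depends on a per-server record counter, the same merge can be re-run from scratch if a participant's data is cleared.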

What does this mean?

- Seamless switching between primary and backup servers

- No risk of mismatched record numbers

- Faster and more efficient updates

- Automatic re-synchronisation if participant data is cleared

As events grow in size and tracker intervals decrease, efficient processing becomes increasingly important.

Decentralised Processing

Previously, one server was responsible for triggering updates across others. While simple, this created unnecessary dependency.

We’ve redesigned this architecture so that each server independently determines which live maps it should update. Processing is now decentralised, and each server maintains a local fallback configuration.
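As a hedged illustration of the pattern, the Python sketch below shows a server working out its own list of maps and falling back to a locally cached copy when a shared configuration cannot be reached. The shared configuration source, the file name, the server names and the `maps_for_server` helper are all assumptions made for the example, not details of the real platform.

```python
import json
import tempfile
from pathlib import Path
from typing import Callable, Dict, List


def maps_for_server(
    server_name: str,
    fetch_shared: Callable[[], Dict[str, List[str]]],
    fallback_path: Path,
) -> List[str]:
    """Return the live maps this server should update, using a local fallback if needed."""
    try:
        assignments = fetch_shared()
        # Refresh the local fallback copy whenever the shared source is reachable.
        fallback_path.write_text(json.dumps(assignments))
    except OSError:
        # Shared source unreachable (ConnectionError is an OSError): use the cached copy.
        assignments = json.loads(fallback_path.read_text())
    return assignments.get(server_name, [])


def shared_ok() -> Dict[str, List[str]]:
    return {"web-1": ["event-a"], "web-2": ["event-b"]}


def shared_down() -> Dict[str, List[str]]:
    raise ConnectionError("shared configuration unreachable")


with tempfile.TemporaryDirectory() as tmp:
    fallback = Path(tmp) / "assignments.json"
    first = maps_for_server("web-2", shared_ok, fallback)
    second = maps_for_server("web-2", shared_down, fallback)

print(first, second)
```

The point of the design is that no server waits for another to tell it what to do; each one can answer the question on its own, even when part of the system is unreachable.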

What does this mean?

In short: no single point of failure.

If one service goes offline, others continue running normally. The system is more complex internally – but significantly more resilient externally.

Performance Upgrade

Our primary web server – the workhorse at the centre of our multi-server platform – has undergone a major upgrade, including a 50% increase in processing threads. Equipped with dozens of CPU cores and triple-digit gigabytes of memory, it’s built to handle sustained peak loads with ease.

As the platform grows, it’s critical that infrastructure stays ahead of demand rather than reacting to it.

What does this mean?

The server can:

- Handle more simultaneous visitors

- Process live updates more smoothly

- Reduce queueing during peak moments

- Remain responsive during large events and traffic spikes

This provides valuable headroom at exactly the moments that matter most.

Backup Improvements

In line with our broader risk-reduction strategy, we’ve expanded our backup system. Automatic backups are stored locally and synchronised to an external Network Attached Storage (NAS) device. Additional backup layers are being introduced to further strengthen protection.
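For the technically curious, a local-then-NAS backup step could look roughly like the Python sketch below. It copies a backup file to a second location and compares checksums afterwards. The checksum verification, the paths and the `sync_backup` helper are assumptions added for illustration; the source does not describe how the real synchronisation is performed.

```python
import hashlib
import shutil
import tempfile
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Compute the SHA-256 digest of a file, reading it in 1 MiB chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def sync_backup(local_file: Path, nas_dir: Path) -> Path:
    """Copy a local backup to the NAS directory and verify the copy."""
    nas_dir.mkdir(parents=True, exist_ok=True)
    target = nas_dir / local_file.name
    shutil.copy2(local_file, target)
    if sha256_of(local_file) != sha256_of(target):
        raise OSError(f"checksum mismatch after copying {local_file.name}")
    return target


with tempfile.TemporaryDirectory() as tmp:
    # Temporary folders stand in for the local disk and the mounted NAS share.
    local = Path(tmp) / "local" / "tracking-backup.sql"
    local.parent.mkdir()
    local.write_bytes(b"example backup contents")
    copied = sync_backup(local, Path(tmp) / "nas")
    copy_matches = copied.read_bytes() == local.read_bytes()

print(copied.name, copy_matches)
```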

What does this mean?

If hardware fails, storage becomes corrupted, or human error occurs, data can be restored quickly and safely. Backups aren’t exciting, but they are fundamental to running a resilient platform.

Power & Connectivity Resilience

We’re also relocating certain backup services so they operate together within the same protected environment. These systems will run on an uninterruptible power supply (UPS) – complete with an off-grid fallback power source – and switch to a mobile network connection in case of fibre outages.

What does this mean?

Even in the event of both power and fibre disruption, backup systems can continue operating – adding yet another layer of resilience in an unpredictable world.

Why This Matters

All the new features we release each year are only as strong as the infrastructure behind them. Our goal is simple:

- No single points of failure

- Automatic redundancies

- Zero manual intervention

- Maximum uptime

This N+1 approach ensures that even if hardware, power or connectivity fails, your event tracking keeps running. No system can ever eliminate risk entirely, but it can be designed to minimise it. That’s exactly what we’re continuing to do.